There is a generation of software developers currently entering the workforce who will be the last to learn programming the way it has been taught for fifty years. They will be the last to sit in lecture halls writing sorting algorithms by hand. The last to grind through LeetCode problems as interview preparation. The last to build their professional identity around the ability to translate logic into syntax.

This is not a prediction. It is an observation about what is already happening.

Employment for software developers aged 22 to 25 declined nearly 20% between its peak in late 2022 and mid-2025. Stanford’s Digital Economy Lab tracked this in monthly data covering 3.5 to 5 million workers and found a pattern that is hard to misread: companies are hiring dramatically fewer junior developers, and the ones they do hire are entering a profession that is mid-metamorphosis.

Meanwhile, employment for developers aged 30 and older in high-AI-exposure fields grew 6% to 12% over the same period. The industry is not shrinking. It is restructuring. And the restructuring favors experience, judgment, and orchestration skill over the ability to write code from scratch.

The Bureau of Labor Statistics still projects 15% growth in software jobs through 2034. That projection is probably right, but the jobs it describes will bear little resemblance to the jobs that exist today. The demand is not for people who can write code. It is for people who can specify outcomes, direct agents, validate results, and make the judgment calls that agents cannot.

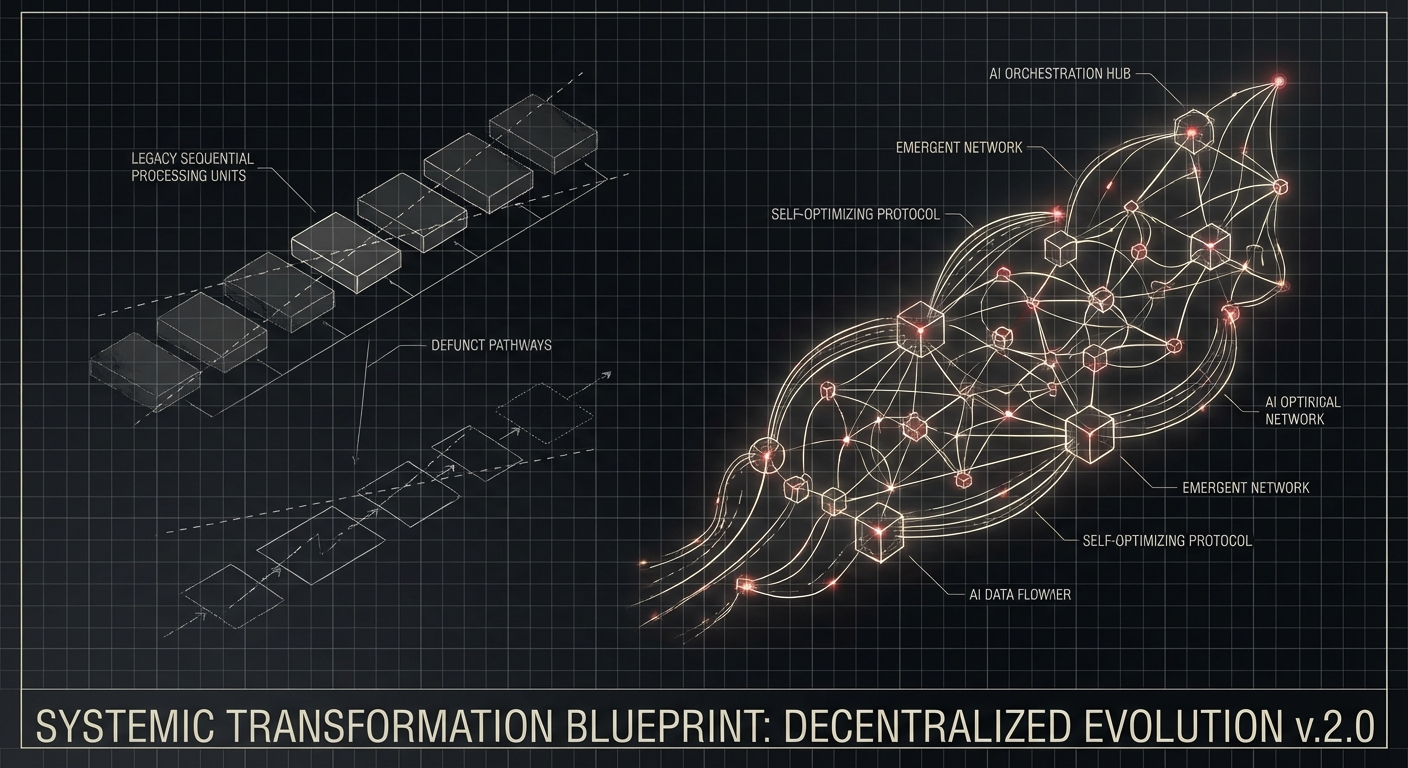

We are witnessing the end of coding as the primary activity of software engineering. What replaces it is something we have been calling orchestration, and it demands a fundamentally different set of skills.

The Adoption Curve Has Already Bent

AI coding tool adoption has moved past the early-adopter phase and into the mainstream.

92% of US developers now use AI coding tools daily. 57% of organizations deploy multi-step agent workflows. Anthropic’s 2026 Agentic Coding Trends report found that developers use AI in 60% of their work, though they fully delegate only 0% to 20% of tasks. The gap between usage and delegation is where the profession currently lives: AI is everywhere, but a human directing the AI, rather than the AI working independently, is still the dominant model.

That gap is closing fast. Nearly nine out of ten developers report saving at least an hour per week with AI tools. One in five saves eight or more hours, a full workday. Among those building production software with AI, 49% report saving six or more hours weekly.

The productivity gains are real and measurable. Salesforce reported a 30% productivity increase from AI tools across its engineering organization, prompting CEO Marc Benioff to announce that the company would hire no more software engineers in 2025. Instead, it would hire more salespeople. Benioff’s framing was blunt: today’s CEOs would be the last generation to manage only humans.

This is not one CEO’s idiosyncrasy. It reflects a structural reality that 54% of engineering leaders confirmed in a 2025 survey: they plan to hire fewer junior developers because AI copilots enable senior engineers to handle more work directly. Entry-level tech hiring decreased 25% year-over-year in 2024, and big tech hired 50% fewer fresh graduates over the past three years.

The numbers describe a profession that is rapidly shedding its entry-level tier while expanding demand for senior practitioners who can leverage AI effectively. This has consequences that extend far beyond hiring numbers.

The Junior Developer Crisis

Every profession depends on its entry level as a talent pipeline. Junior lawyers do document review. Junior doctors do residencies. Junior developers write CRUD endpoints and fix bugs. These entry-level activities serve two functions: they produce useful work, and they train the next generation of senior practitioners.

AI is eliminating the useful work function without providing a replacement for the training function. Agents can write CRUD endpoints faster and more reliably than junior developers. They can fix routine bugs, write tests, and generate boilerplate without supervision. The tasks that organizations historically assigned to junior developers, the tasks that taught them how production systems work, are being automated away.

This creates a pipeline problem. If junior developers do not get hired, they do not gain the experience that produces senior developers. If senior developers are not produced, the supply of the experienced practitioners that the AI-augmented model depends on eventually runs dry. The industry is consuming its seed corn.

Some organizations are aware of this and are deliberately protecting junior-level work for talent development purposes even when AI could handle it more efficiently. This is the right instinct, but it requires organizational discipline that runs counter to the immediate economic incentive. Every manager facing budget pressure and headcount targets will find it hard to justify hiring a junior developer at $100,000 per year to do work that an AI agent can do for $100 per month, even if the long-term talent pipeline argument is sound.

The resolution will likely involve a fundamental redesign of how junior engineers are trained. Instead of learning by writing code, they will learn by specifying outcomes, reviewing agent output, debugging agent failures, and gradually taking on the orchestration responsibilities that define the senior role. The apprenticeship model will shift from “write code under supervision” to “direct agents under supervision.” But this new apprenticeship model does not yet exist at scale, and the industry is in the gap between the old model failing and the new model emerging.

Vibe Coding: Promise and Peril

Into this gap has stepped a phenomenon that Andrej Karpathy named “vibe coding” in February 2025. The term became Collins Dictionary’s Word of the Year. Searches for it spiked 6,700%. It captured something real about how software development is changing: the ability to build functional applications by describing what you want in natural language and letting AI figure out the implementation.

Vibe coding has democratized software creation in ways that are genuinely valuable. Non-technical founders can prototype products. Domain experts can build tools without learning to program. The barrier to creating software has dropped from years of education to an afternoon with an AI coding assistant.

But vibe coding has a dark side that the industry is only beginning to reckon with.

A METR study found that applications built purely through vibe coding were 40% more likely to contain critical security vulnerabilities than applications built with traditional methods. Veracode’s GenAI Code Security Report found that 45% of AI-generated code introduces common OWASP vulnerabilities. Testing of five major vibe coding tools across fifteen test applications found 69 vulnerabilities, with roughly a half-dozen rated critical.

AI-co-authored pull requests showed security vulnerabilities at 2.74 times the rate of human-authored pull requests. Palo Alto Networks considered the risk significant enough to release a dedicated Vibe Coding Security Framework.

The irony is pointed: Karpathy himself hand-coded a subsequent project rather than vibe-coding it, a tacit acknowledgment that the approach has limits.

The problem is not that AI writes bad code. Modern AI models write perfectly competent code for well-defined tasks. The problem is that vibe coding removes the human understanding that would catch the code’s blind spots. When a developer writes code, they develop a mental model of the system’s behavior, its edge cases, its failure modes, its security boundaries. When a vibe coder describes what they want and accepts whatever the AI produces, that mental model never forms. The code works, until it does not, and when it fails, nobody understands why.

This is the distinction between vibe coding and what we practice as AI-native engineering. AI-native engineering uses agents extensively, but it maintains human judgment at the boundaries: specification, architecture, security review, validation. The human understands what the system is supposed to do and verifies that it does it. Vibe coding skips this step, and the consequences range from bugs to breaches.
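To make the "works, until it does not" failure mode concrete, here is a minimal sketch of one common OWASP category, injection. It is our own illustration, not taken from any of the reports cited above: the `users` table, the function names, and the payload are all hypothetical. Both lookups behave identically on the happy path, which is exactly why an unreviewed version can pass a casual demo.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Builds SQL by string interpolation: fine for "alice", injectable otherwise.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver treats `name` strictly as data.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_db()
payload = "x' OR '1'='1"  # hypothetical attacker input

happy_path = find_user_unsafe(conn, "alice")  # one row, looks correct
leaked = find_user_unsafe(conn, payload)      # every row in the table
blocked = find_user_safe(conn, payload)       # no rows: no user has that name
```

A reviewer who understands the query's security boundary spots the difference in seconds. Someone who only checks that "searching for alice works" does not.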

The Orchestrator Paradigm

What replaces the traditional developer role is not a diminished version of the same job. It is a genuinely different job that happens to share some skills with the old one.

The orchestrator’s primary activities are:

Specification engineering. Defining what needs to be built with enough precision to drive agent execution. This requires deep domain understanding, the ability to anticipate edge cases, and skill in structured communication. It is harder than writing code, because writing code allows you to hide ambiguity behind implementation details. A specification must make every decision explicit.

Context engineering. Assembling and curating the information agents need to produce good output: domain models, architectural patterns, codebase conventions, historical decisions and their rationale. The quality of context determines the quality of agent output. Two teams with identical AI tools will produce dramatically different results based on the quality of context they provide.

Agent direction. Choosing which agents to use for which tasks, configuring their parameters, managing their execution across parallel workstreams, and intervening when they go off track. This is operational skill, closer to project management than to programming, but it requires enough technical depth to evaluate what the agents are doing.

Output validation. Reviewing agent-generated code and systems against specifications, identifying gaps, catching errors that automated testing misses, and making judgment calls about quality, security, and architectural fitness. This is the most critical human function in AI-native development, and it is where experience matters most. You cannot validate what you do not understand, and understanding comes from years of building systems.

Architecture and design. Agents are effective implementers but unreliable architects. System architecture (the decisions about how components interact, how data flows, how failure is handled, and how the system scales) remains a fundamentally human responsibility. Agents can propose architectural options, but evaluating them requires judgment about trade-offs that only experience provides.

The common thread across all of these activities is that they require judgment, not typing. The orchestrator’s value is not in the volume of code they produce but in the quality of the decisions they make. This is a role that rewards experience disproportionately, which explains why senior developer employment is growing while junior developer employment is shrinking.
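One way to picture "a specification must make every decision explicit" is a structure that refuses to go to an agent while any decision is still open. The sketch below is an assumption-laden illustration, not a description of any particular tool: the `Spec` class, its fields, and the password-reset example are all hypothetical, and it assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field


@dataclass
class Spec:
    """Hypothetical structured specification handed to an agent."""

    goal: str
    # Each key is a question the implementation must answer; an empty
    # string means the decision has not been made yet.
    decisions: dict[str, str] = field(default_factory=dict)
    acceptance_checks: list[str] = field(default_factory=list)

    def open_questions(self) -> list[str]:
        """Return the decision questions that are still unanswered."""
        return [q for q, answer in self.decisions.items() if not answer.strip()]

    def ready_for_agent(self) -> bool:
        """Ready only when every decision is explicit and at least one
        acceptance check exists to validate the output against."""
        return not self.open_questions() and bool(self.acceptance_checks)


spec = Spec(
    goal="Add password reset by email",
    decisions={
        "How long is a reset token valid?": "30 minutes",
        "What happens for an unknown email address?": "",
    },
    acceptance_checks=["Expired tokens are rejected"],
)

missing = spec.open_questions()        # the unknown-email question
ready_before = spec.ready_for_agent()  # False: one decision is still implicit

spec.decisions["What happens for an unknown email address?"] = (
    "Return the same generic response as for a known address"
)
ready_after = spec.ready_for_agent()   # True
```

The point of the design is that ambiguity becomes visible before execution rather than being silently resolved by whatever the agent happens to choose.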

The Bottleneck Shift

The most underappreciated consequence of AI-augmented development is the shift in where bottlenecks occur.

In traditional development, the bottleneck was implementation. There was more to build than there were developers to build it. Every optimization (faster languages, better frameworks, more efficient processes) was aimed at increasing the speed of code production.

With AI agents handling implementation, the bottleneck has moved. The new bottlenecks are:

Code review. Agents produce code faster than humans can review it. When an agent generates a complete feature implementation in ten minutes, the review that ensures it is correct, secure, and architecturally sound takes longer than the implementation. Organizations that do not invest in review processes and tooling will either slow down to human review speed or deploy unreviewed code. Neither is acceptable.

Testing and validation. Agents can generate tests, but validating that the tests are meaningful, that they cover the right scenarios, and that passing tests actually indicate correctness requires human judgment. The testing bottleneck is not writing tests. It is designing test strategies that provide genuine confidence.

Infrastructure and deployment. Agents can produce working code, but getting that code into production safely (managing environments, handling database migrations, configuring monitoring, managing secrets) requires operational expertise, an area where agents are less reliable. DevOps and platform engineering have become more important, not less, as AI accelerates the rate of code production.

Specification clarity. The quality of agent output is limited by the quality of the specification. Vague, ambiguous, or incomplete specifications produce vague, wrong, or incomplete implementations. The bottleneck is not “can we build it” but “have we defined it precisely enough to build it correctly.” This shifts the critical path from engineering to product thinking: understanding the problem deeply enough to specify the solution precisely.

These new bottlenecks demand different skills than the old ones. They demand review skill, testing judgment, operational expertise, and product thinking. They are all activities where experience compounds and where AI augments rather than replaces human capability.
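The code review bottleneck described above is easy to see with back-of-the-envelope arithmetic. The sketch below is a deliberately simple model with made-up numbers: the function name and every input value are illustrative assumptions, not measurements.

```python
def review_backlog(
    prs_per_day: float,
    review_minutes_per_pr: float,
    reviewer_minutes_per_day: float,
    days: int,
) -> list[float]:
    """Return the number of unreviewed pull requests at the end of each day.

    Assumes a constant arrival rate and a constant review capacity, which
    real teams will not have; the shape of the curve is what matters.
    """
    capacity = reviewer_minutes_per_day / review_minutes_per_pr
    backlog = 0.0
    history = []
    for _ in range(days):
        # The backlog cannot go negative: spare capacity is simply unused.
        backlog = max(0.0, backlog + prs_per_day - capacity)
        history.append(backlog)
    return history


# Illustrative inputs: agents open 12 PRs a day, each takes 45 minutes to
# review properly, and the team has 6 hours (360 minutes) of review time.
week = review_backlog(12, 45, 360, days=5)
print(week)  # [4.0, 8.0, 12.0, 16.0, 20.0]
```

With these inputs the team can review eight pull requests a day, so the queue grows by four every day. Nothing about the agents' speed fixes that. Only more review capacity, faster review tooling, or fewer and better-specified changes does.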

What This Means for Engineering Organizations

The implications for how engineering organizations are structured, staffed, and managed are significant.

Flatter hierarchies, smaller teams. When a senior engineer directing agents can do the work of a five-person team, the optimal team size shrinks. Organizations will move toward small, senior-heavy teams with deep domain expertise rather than large, pyramid-shaped organizations with layers of junior and mid-level developers.

New role definitions. Titles like “AI Orchestrator” and “Killswitch Engineer” are emerging to describe roles that did not exist two years ago. The Killswitch Engineer is responsible for monitoring agent behavior and intervening when it goes wrong, a role that requires deep technical understanding combined with operational judgment. These roles reflect a genuine shift in what organizations need, not just rebranding of existing positions.

Investment in review infrastructure. Organizations that take quality seriously will invest in tooling and processes that make code review faster and more effective. This includes AI-assisted review tools, specification-linked diff views, automated security scanning, and structured review workflows. The review function is the quality gate for the entire AI-native development process, and underinvesting in it is the fastest way to accumulate technical debt and security vulnerabilities.

Deliberate talent development. Organizations that want to produce the next generation of senior engineers will need to create structured development programs that teach orchestration skills: specification writing, context engineering, agent management, output validation. These programs cannot be ad hoc. They need to be as intentional and structured as the residency programs that produce doctors, because the skills they teach are equally critical and equally difficult to acquire.

Compensation restructuring. When individual engineers can produce 5x to 10x the output they could produce without AI, compensation models based on market rates for generic “software engineer” titles become inadequate. Compensation will increasingly reflect the leverage that skilled orchestrators provide: the difference between an engineer who uses AI effectively and one who does not is not 30%. It is a factor of five or more. Pay structures will eventually reflect this variance.
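The monitoring-and-intervention duty attributed to the Killswitch Engineer above can be pictured as a guard that every agent action must pass through. This is a minimal sketch under our own assumptions: the `Killswitch` class, its limits, and the action names are hypothetical and do not describe any existing agent framework. It assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field


class AgentHalted(Exception):
    """Raised when the guard stops an agent run."""


@dataclass
class Killswitch:
    """Hypothetical guard that enforces a step budget and a path allow-list."""

    max_steps: int
    allowed_prefixes: tuple[str, ...]
    steps_taken: int = 0
    log: list[str] = field(default_factory=list)

    def check(self, action: str, path: str) -> None:
        """Record one agent action and raise AgentHalted if it breaks a limit."""
        self.steps_taken += 1
        self.log.append(f"{action} {path}")  # keep an audit trail for humans
        if self.steps_taken > self.max_steps:
            raise AgentHalted(f"step budget of {self.max_steps} exceeded")
        if not path.startswith(self.allowed_prefixes):
            raise AgentHalted(f"{action} outside allowed paths: {path}")


guard = Killswitch(max_steps=3, allowed_prefixes=("src/", "tests/"))
guard.check("write", "src/app.py")  # allowed

try:
    guard.check("write", ".env")    # touches a file outside the allow-list
except AgentHalted as exc:
    halted_reason = str(exc)        # "write outside allowed paths: .env"
```

The mechanical guard is the easy part. Deciding which limits are right for a given system, and what to do after a halt, is where the operational judgment the role calls for comes in.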

The Klarna Lesson

It is worth pausing on Klarna’s experience as a cautionary tale about the pace of this transition.

Klarna made headlines by replacing 700 employees with AI, claiming that AI handled 75% of customer service interactions and projecting $40 million in profit gains. The story was held up as proof that AI could replace human workers wholesale.

Within a year, Klarna’s CEO acknowledged that the aggressive replacement strategy had prioritized cost reduction over quality, resulting in measurably lower service quality. The company reversed course and began rehiring humans in a hybrid model.

The lesson is not that AI replacement does not work. It is that replacement without thoughtful integration produces inferior results. The organizations succeeding with AI are not the ones that fired everyone and replaced them with agents. They are the ones that restructured how humans and agents work together, keeping human judgment in the loop while using agents to handle implementation, routine processing, and scale.

This applies directly to engineering organizations. The goal is not to replace developers with AI. It is to transform what developers do: from writing code to orchestrating agents, from producing output to ensuring quality, from implementing solutions to defining problems. The organizations that approach this as a cost-cutting exercise will have their Klarna moment. The ones that approach it as a capability transformation will build something far more powerful than either human-only or AI-only teams could achieve.

The Talent Market in Transition

For individual developers, the implications of this shift are both threatening and empowering.

The threat is obvious. If you have built your career around the ability to write code quickly and correctly, that skill is rapidly depreciating. Code production is being commoditized. The value of knowing syntax, memorizing APIs, and writing algorithms from scratch is declining toward zero.

The empowerment is that the skills replacing code production (specification engineering, system design, quality judgment, domain expertise) are harder to automate and more valuable to organizations. A developer who can define a problem precisely, direct agents to solve it, and validate that the solution is correct and secure is more valuable today than a developer who can only implement solutions manually. And the gap will widen.

The practical advice for developers at every level is the same: invest in the skills that AI amplifies rather than the skills AI replaces. Learn to write precise specifications. Develop deep domain expertise in an industry or problem space. Build judgment about system architecture and design trade-offs. Get comfortable directing agents and reviewing their output critically. These are the skills that define the orchestrator role, and they are the skills that will command premium compensation as the market restructures.

For developers early in their careers, the transition is harder because they have not yet had the opportunity to develop the experience and judgment that the orchestrator role requires. The best path forward is to seek organizations that are investing in structured talent development and deliberately creating opportunities for junior engineers to develop orchestration skills under senior supervision. These organizations exist, and they will increasingly differentiate themselves in the talent market by offering genuine career development in the new paradigm.

The Paradox of Automation

There is a well-known paradox in automation research: the more reliable the automated system, the harder it is for the human operator to detect and correct its failures, because the human’s skills atrophy from disuse.

Software engineering is entering this paradox. As AI agents handle more of the implementation work, developers get fewer opportunities to develop the deep understanding of code behavior that enables effective review and debugging. The system works well in steady state, but when it fails, the human operator may lack the understanding needed to diagnose and fix the failure.

This is why the transition from coder to orchestrator cannot be a simple substitution. It must be accompanied by new forms of engagement with the code: not writing it from scratch, but studying agent output deeply, understanding why agents made the choices they made, building mental models of system behavior through active review rather than active implementation.

The developers who thrive in this transition will be the ones who remain deeply curious about how things work, even when they are not the ones building them. They will read agent-generated code not to rubber-stamp it but to understand it. They will push back on agent decisions that seem questionable. They will maintain the technical depth that makes their judgment valuable, even as the day-to-day activity of typing code fades from their workflow.

Looking Forward

The generation of developers entering the workforce today is not the last generation of software engineers. It is the last generation of coders, in the narrow sense of people whose primary professional activity is translating logic into syntax.

What follows is a generation of orchestrators: engineers whose primary activity is defining problems, directing agents, and validating solutions. This is not a lesser role. It is a harder one. It requires everything that good coding required (understanding of data structures, algorithms, system design, security, and performance) plus a layer of specification skill, judgment, and strategic thinking that coding alone never demanded.

The profession is not dying. It is growing up. The question is whether individuals, organizations, and educational institutions will grow with it, or whether they will cling to a model of software development that is already fading into history.

The agents are here. The code writes itself. The question is who will direct it, and whether they will direct it well. That is the job now. That is the only job that matters.